Sriramprabhu Rajendran is a Senior Engineering Leader with over two decades of experience building large-scale, distributed enterprise systems, with deep expertise in the financial services domain. His current focus lies at the intersection of Generative AI and enterprise architecture, where he designs and implements agentic AI systems that move beyond conversational interfaces to orchestrated, outcome-driven workflows.

With a strong foundation in cloud-native platforms, event-driven architectures, and microservices, Sriram specializes in translating complex business processes into scalable, resilient, and measurable systems. His work emphasizes deterministic orchestration, domain-specialized agents, and production-grade AI implementations that deliver tangible business impact.

He brings a unique combination of technical depth and strategic leadership, with a proven track record of delivering high-value programs, building high-performing teams, and driving innovation across organizations. Through his work, he continues to shape how enterprises adopt AI—not just to answer questions, but to execute work at scale.

The Chatbot Trap

In conversations with engineering leaders, a familiar pattern keeps emerging around AI deployments. Teams invest months perfecting conversational interfaces, ensuring they are smooth, intuitive, and demo-ready. The result often looks impressive: systems that answer questions, summarize documents, and perform seamlessly in front of stakeholders. But once deployed, a critical gap becomes evident—these systems rarely translate into meaningful execution.

“What looks impressive in a boardroom often fails in production—because answering questions is not the same as getting work done.”

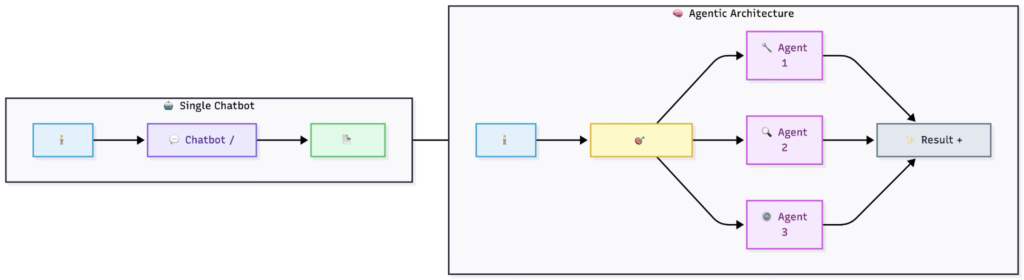

This is what can be described as the “Chatbot Trap.” Organizations deploy sophisticated language models, only to end up with highly polished interfaces that function more like advanced FAQ engines. Meanwhile, the complex, costly, and coordination-heavy workflows that truly drive business outcomes remain largely untouched.

“You don’t need a better chatbot—you need systems that actually execute.”

When AI is limited to answering questions rather than executing tasks, a significant portion of its potential value remains unrealized.

Agents, Not Oracles

The big shift in 2026 isn’t bigger models or even better prompts. It is the move from “ask it a question, get an answer” to orchestrating multiple agents, so that AI doesn’t just talk, but works.

Consider a typical enterprise workflow: a request to review a contract across multiple jurisdictions, or to run a compliance process that pulls data from three different systems. Today, that request moves between people, systems, and spreadsheets. Someone coordinates it. Someone waits for something to happen. Someone follows up.

Multiply this by hundreds of requests per month, and you have a small army of people whose only function is to route requests, not complete them.

Now imagine replacing that coordination layer, along with the people, spreadsheets, and emails that make it up, with a set of highly specialized AI agents. One agent interprets the request and breaks it into smaller tasks. Another retrieves data from your systems of record. Another applies domain-specific rules such as legal constraints, regulatory requirements, and formatting requirements. Another detects exceptions and determines when a human should be brought into the process.

“Most AI deployments don’t fail due to lack of intelligence—they fail due to lack of orchestration.”

This is not science fiction. Teams are already running this in production for document processing, contract management, and regulatory workflows. The results have not been incremental. I have seen cycle times reduced from weeks to days, with the majority of manual coordination disappearing.

Three Architectural Pillars That Actually Work

Having spent time building these systems and assessing how others build them, I have found three design principles that separate the systems that deliver from those that do not.

1. Vertical Specialization Over Monolithic Intelligence

Stop trying to use one model for everything. The pattern that works is a set of narrow, well-scoped agents, each with well-defined knowledge boundaries and responsibilities.

One agent is responsible for interpreting HR policy. Another handles legal compliance checks. Yet another owns the logic behind financial forecasting. None of them tries to be an expert in areas it was not built for, because each is grounded in its own knowledge base: think RAG with well-scoped vector stores.

“A well-designed agent doesn’t try to know everything—it knows exactly what it is responsible for.”

Why is this important? When you ask a general-purpose model to perform a specialized task, the answer sounds right but often misses the domain-specific nuances. The specialized agent, by contrast, is accurate and auditable because it has guardrails around it. You can version it, test it, and monitor it, just like any other microservice.
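To make the idea of knowledge boundaries concrete, here is a minimal sketch of a registry of narrowly scoped, versioned agents. Everything in it is hypothetical: the agent names, versions, and topic sets are illustrative, and a real agent would wrap a model plus its own scoped vector store rather than a set of strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedAgent:
    """A hypothetical agent descriptor with an explicit knowledge boundary."""
    name: str
    version: str          # versioned like any other microservice
    topics: frozenset     # the only topics this agent may answer

    def can_handle(self, topic: str) -> bool:
        return topic in self.topics


# Illustrative registry; names and topics are assumptions, not a real catalog.
REGISTRY = [
    ScopedAgent("hr_policy", "1.2.0", frozenset({"leave", "benefits"})),
    ScopedAgent("legal_compliance", "0.9.1", frozenset({"contract", "gdpr"})),
    ScopedAgent("finance_forecast", "2.0.0", frozenset({"budget", "forecast"})),
]


def route(topic: str) -> ScopedAgent:
    """Return the single agent that owns a topic, or fail loudly."""
    matches = [agent for agent in REGISTRY if agent.can_handle(topic)]
    if len(matches) != 1:
        raise LookupError(f"no unique agent for topic {topic!r}")
    return matches[0]
```

Failing loudly on an unowned topic is the point of the design: an out-of-scope question becomes an exception you can route to a human, not a confident answer from the wrong agent.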

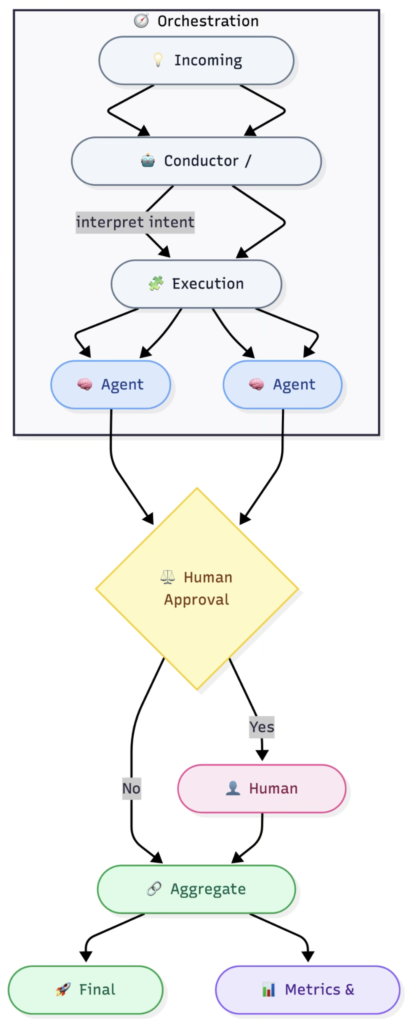

2. Deterministic Orchestration

This is where most agentic approaches break down: they put workflow decisions in the hands of the AI. The LLM decides which tool to invoke, in what order, and with what parameters, and the whole system becomes a black box that works beautifully in the demo and fails in production in ways you cannot predict.

“Deterministic orchestration turns AI from a black box into a reliable enterprise system.”

The alternative is deterministic orchestration. You create a central engine, or conductor, that understands the intent of the user and determines the execution plan before any agent is invoked. The conductor knows the dependency graph of the agents: which ones must run first, which ones can run in parallel, and so on.

The heavy cognitive lifting is still done by the AI within each step. But the flow (the sequencing, the error handling, the retry logic) is engineered, not improvised. If you know how to build event-driven systems using Kafka or design saga patterns for distributed transactions, these are the same principles applied to a different problem.
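A conductor of this kind can be sketched with nothing more than a topological sort. The example below is a minimal illustration, not a production engine: the step names in `PLAN` and the `handlers` mapping are hypothetical, and the retry policy is deliberately naive. It uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) to fix the execution order before any agent runs.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each step maps to the set of steps it depends on.
PLAN = {
    "classify_intent": set(),
    "fetch_records": {"classify_intent"},
    "apply_rules": {"classify_intent"},
    "detect_exceptions": {"fetch_records", "apply_rules"},
}


def execution_waves(plan):
    """Group steps into waves; steps in the same wave could run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


def run(plan, handlers, max_retries=2):
    """Run each step in plan order, retrying failures a fixed number of times."""
    results = {}
    for wave in execution_waves(plan):
        for step in wave:  # sequential here; a real engine might fan out
            for attempt in range(max_retries + 1):
                try:
                    # Each handler receives the results of earlier steps.
                    results[step] = handlers[step](results)
                    break
                except Exception:
                    if attempt == max_retries:
                        raise
    return results
```

For this plan, `execution_waves(PLAN)` yields three waves: intent classification, then record retrieval and rule application together, then exception detection. The model calls live inside the handlers; the sequencing and retries never depend on what the model says.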

3. Measurable Outcomes, Not Vibes

If someone tells me their AI solution has “improved efficiency” or “enhanced user experience” without any quantification, I get nervous. If you can’t measure it, you can’t defend it, and you certainly can’t scale it.

“If you can’t measure AI outcomes, you can’t scale them.”

The metrics that matter for agentic systems are tangible: cycle time reduction, manual hours saved, error rates pre- and post-implementation, cost per transaction. When an orchestrated workflow reduces a multi-week evaluation cycle to a few days, it’s not a nebulous productivity improvement; it’s an 80-85% cycle time reduction that has a direct link to headcount optimization, accelerated revenue recognition, or reduced compliance risk.

Set your baselines before you deploy. Measure everything. And communicate your results in a language your CFO understands, rather than tokens per second.
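The arithmetic behind these metrics is simple enough to put in a shared helper so every team reports it the same way. The sketch below uses made-up numbers: the 20-day baseline, 3-day result, and cost figures are assumptions for illustration, not measurements from any real deployment.

```python
def pct_reduction(baseline: float, current: float) -> float:
    """Percentage reduction relative to a pre-deployment baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


def cost_per_transaction(total_cost: float, transactions: int) -> float:
    """Average cost of one completed transaction."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return total_cost / transactions


# Hypothetical figures: a 20-day evaluation cycle cut to 3 days.
cycle_time_gain = pct_reduction(baseline=20, current=3)   # 85.0

# Hypothetical figures: 12,000 in monthly cost spread over 400 transactions.
unit_cost = cost_per_transaction(12_000, 400)             # 30.0
```

The baseline argument is the part that cannot be reconstructed later, which is why it has to be captured before deployment.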

What This Looks Like in Practice

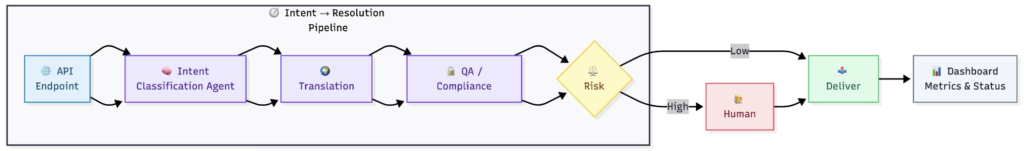

Let me illustrate with a specific case. Suppose you are managing a large-scale operation that processes thousands of standardized documents (contracts, policies, compliance filings) in dozens of languages. In the old process, a coordinator would receive a request, farm it out to translators, send it through legal review, and track its status in a spreadsheet.

In an agentic architecture, the request will reach an API endpoint. An intent classification agent will identify the document type and languages of interest. The translation agent will perform the heavy lifting of translation using domain-specific terminology models instead of generic machine translation models. The quality assurance agent will perform automated tests against compliance rules. The routing agent will decide whether human review is needed based on risk scoring models. And finally, the entire process will report back on status, cost, and quality metrics to a dashboard in real time.
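The flow described above can be sketched as a fixed list of stages acting on a shared job record. This is a toy under stated assumptions: every stage is a stub standing in for a real agent or model call, and the `Job` fields, the risk formula, and `REVIEW_THRESHOLD` are all hypothetical.

```python
from dataclasses import dataclass, field

REVIEW_THRESHOLD = 0.7  # hypothetical risk cutoff for human review


@dataclass
class Job:
    text: str
    target_langs: list
    doc_type: str = "unknown"
    translations: dict = field(default_factory=dict)
    qa_issues: list = field(default_factory=list)
    route: str = "pending"
    status: list = field(default_factory=list)  # completed stages, for a dashboard


def classify_intent(job):
    # Stub: a real agent would call a classification model here.
    job.doc_type = "contract" if "agreement" in job.text.lower() else "policy"


def translate(job):
    # Stub: tags the text per language instead of translating it.
    job.translations = {lang: f"[{lang}] {job.text}" for lang in job.target_langs}


def quality_check(job):
    # Stub compliance rule: contracts must mention a governing law.
    if job.doc_type == "contract" and "governing law" not in job.text.lower():
        job.qa_issues.append("missing governing-law clause")


def route(job):
    # Toy risk score: contracts start riskier, each QA issue adds more.
    base = 0.4 if job.doc_type == "contract" else 0.1
    risk = min(1.0, base + 0.4 * len(job.qa_issues))
    job.route = "human_review" if risk >= REVIEW_THRESHOLD else "auto_approve"


STAGES = [classify_intent, translate, quality_check, route]


def process(job):
    """Run the fixed stage sequence and record progress after each stage."""
    for stage in STAGES:
        stage(job)
        job.status.append(stage.__name__)
    return job
```

Calling `process(Job("Service Agreement between A and B", ["de", "fr"]))` sends the job to human review, because the stub QA rule flags the missing clause; a plain policy document is auto-approved. The coordinator's spreadsheet is replaced by the `status` list that each job carries with it.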

What about the role of a coordinator? Well, it doesn’t exist anymore. Not because someone has replaced a human coordinator with AI, but simply because the work of coordinating wasn’t really valuable work to begin with. The domain experts are now able to focus on the exceptions that really need their expertise.

The Hard Part Nobody Talks About

The easy part is building the agents. Trust me when I say that with the current state of tooling, using LangChain, CrewAI, AutoGen, or even a homegrown solution on top of Spring Boot and Kafka, it’s a matter of days, not months, to get a multi-agent prototype running.

“Building agents is easy. Building production-ready systems around them is the real challenge.”

The hard part is everything else:

- Data Access and Governance: Your agents need to read from and write to enterprise systems. That means dealing with IAM policies, data classification rules, and API rate limits, not just wiring up a simple REST call.

- Observability: How will you debug when an agentic workflow produces the wrong output? You need to know which agent made which decision with what context and at what step of the workflow. Distributed tracing with X-Ray or OpenTelemetry is not a nice-to-have; it’s a must-have.

- Testing: How do you regression test a system when the AI’s output is non-deterministic? You build deterministic scaffolding around non-deterministic components. Pin your orchestration logic. Snapshot test your agent’s output. Chaos test your fallbacks.

- Human-in-the-loop design: Not all decisions need to be made by AI. The art is in deciding when to insert human checkpoints without reintroducing the bottlenecks you just removed.
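As one illustration of the testing point above, the sketch below shows deterministic scaffolding around a non-deterministic component: the snapshot comparison looks only at the structured fields the workflow depends on, and the fallback path can be exercised by injecting a failing agent. The field names (`decision`, `clauses`, `summary`) and both helper functions are hypothetical.

```python
def normalize(output: dict) -> dict:
    """Keep only the fields the workflow depends on; ignore free-text wording."""
    return {
        "decision": output["decision"],
        "clauses": sorted(output["clauses"]),
    }


def snapshot_matches(output: dict, snapshot: dict) -> bool:
    """Compare an agent's output with a stored snapshot of its structured part."""
    return normalize(output) == snapshot


def call_with_fallback(agent, fallback, payload):
    """Try the primary agent; on any failure, use the deterministic fallback."""
    try:
        return agent(payload)
    except Exception:
        return fallback(payload)


# Hypothetical snapshot captured from a reviewed, known-good run.
SNAPSHOT = {"decision": "approve", "clauses": ["liability", "termination"]}

# Two runs with different wording but the same structured result both pass.
run_a = {"decision": "approve", "clauses": ["termination", "liability"],
         "summary": "Looks fine."}
run_b = {"decision": "approve", "clauses": ["liability", "termination"],
         "summary": "No issues found in either clause."}
```

The design choice is to snapshot decisions rather than prose: free-text summaries will vary from run to run, but a changed decision or clause list is a regression worth failing the build for.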

Where This Is Headed

The winners of the next 18 months are not going to be the companies with the best chatbots. They’re going to be the companies that figured out how to break down their most expensive workflows into orchestrated agent pipelines with clear boundaries, solid observability, and measurable ROI.

“The future of AI in the enterprise is not better prompts, it’s better plumbing.”

For those of us who’ve spent years building distributed systems, event-driven systems, and API-first platforms, that’s actually good news. The skills transfer. The patterns transfer. It’s a massive opportunity.

“Stop trying to get your AI to answer questions. Start trying to get your AI to do the work.”

Key Takeaways

We’re clearly moving from a world where AI just answers questions to one where it actually gets work done. That shift, from chatbot demos to real, production-grade systems, is where agentic orchestration comes in.

Curious how others are approaching this: are you still managing workflows through inboxes and spreadsheets, or starting to break them into agent-driven pipelines?

Would love to hear what’s working (and what’s not) in your experience with AI agents in production.